AI Agent & LLM Evaluation

Evaluate AI agents & LLMs in production—not demos.

Most teams ship on vibes. Get a production-ready LLM evaluation framework: four layers of agent evaluation, drift detection, and metrics that tie to business outcomes.

Why LLM & AI agent evaluation is hard

To evaluate AI agents and LLMs well, you need more than accuracy. Agents combine non-determinism, tools, memory, and long-horizon reasoning—so traditional LLM evaluation metrics fall short in production.

Pain points

- “It works in staging but fails in real user workflows”

- “We improved prompt accuracy but conversion dropped”

Metrics that matter

A production AI agent evaluation framework should report metrics that tie directly to business KPIs, not only to offline accuracy.

Four layers of AI agent & LLM evaluation

A strong LLM evaluation framework goes beyond accuracy. These layers cover production AI and LLM testing end to end.

Task correctness

Did the agent complete the task correctly? Essential for any LLM evaluation—beyond single-turn accuracy.

Tool & API reliability

Did tools run as expected? Latency, errors, and correctness of tool outputs. Critical for AI agent evaluation when agents use tools.
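As a rough illustration of this layer, the sketch below summarizes error rate, output correctness, and p95 latency from a log of tool calls. The `ToolCall` schema and the `tool_reliability` helper are hypothetical names chosen for this example, not part of any AgentX API.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class ToolCall:
    """One recorded tool invocation (hypothetical log schema)."""
    tool: str
    latency_ms: float
    ok: bool              # the call returned without an error
    output_correct: bool  # the output matched the expected result


def tool_reliability(calls: list[ToolCall]) -> dict[str, float]:
    """Summarize error rate, output accuracy, and p95 latency."""
    if not calls:
        raise ValueError("no tool calls recorded")
    n = len(calls)
    latencies = [c.latency_ms for c in calls]
    # quantiles(n=20) returns 19 cut points; the last one is the 95th
    # percentile. "inclusive" keeps the result within the observed range.
    p95 = quantiles(latencies, n=20, method="inclusive")[-1] if n > 1 else latencies[0]
    return {
        "error_rate": sum(not c.ok for c in calls) / n,
        "output_accuracy": sum(c.output_correct for c in calls) / n,
        "p95_latency_ms": p95,
    }
```

In practice these numbers would likely be broken down per tool, since one flaky integration can hide behind a healthy average.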

Reasoning & consistency

Multi-step reasoning quality, coherence, and consistency across runs. Key LLM evaluation metrics for production.
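One simple way to put a number on consistency across runs is to repeat the same input several times and measure how often the runs agree. The sketch below assumes final answers can be compared as normalized strings, which is a simplification; free-form answers would probably need semantic comparison instead. The function name is illustrative.

```python
from collections import Counter


def consistency_rate(outputs: list[str]) -> float:
    """Fraction of runs that agree with the most common output."""
    if not outputs:
        raise ValueError("need at least one run")
    # Normalize case and whitespace so trivial differences do not count.
    normalized = [" ".join(o.lower().split()) for o in outputs]
    _, top_count = Counter(normalized).most_common(1)[0]
    return top_count / len(normalized)
```

For example, `consistency_rate(["Refund approved", "refund  approved", "Refund denied"])` gives two agreeing runs out of three.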

Business & user impact

User satisfaction, completion rate, and downstream business KPIs. The top layer of a full agent evaluation framework.

Production-ready LLM evaluation framework

AgentX gives you an AI agent evaluation framework built for production: continuous LLM evaluation, regression and benchmark suites, and LLM evaluation metrics tied to business outcomes. Evaluate AI agents at scale.

- Continuous LLM evaluation in production

- Regression and benchmark suites for AI agent testing

- LLM evaluation metrics tied to business KPIs

- Prompt and dataset drift detection and alerting
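To make the drift detection item concrete, here is a minimal sketch that uses the Population Stability Index (PSI) to compare the mix of incoming request categories against a baseline. PSI is one common choice, not necessarily what AgentX uses, and the 0.2 alert threshold is a widely quoted rule of thumb rather than a fixed standard. All names are illustrative.

```python
import math
from collections import Counter


def population_stability_index(
    baseline: list[str], current: list[str], eps: float = 1e-6
) -> float:
    """PSI between two samples of categorical labels (e.g. user intents)."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    b_counts, c_counts = Counter(baseline), Counter(current)
    psi = 0.0
    for category in set(baseline) | set(current):
        # Floor each share at eps so unseen categories do not hit log(0).
        b = max(b_counts[category] / len(baseline), eps)
        c = max(c_counts[category] / len(current), eps)
        psi += (c - b) * math.log(c / b)
    return psi


def drift_alert(baseline: list[str], current: list[str], threshold: float = 0.2) -> bool:
    """True when the category mix has shifted past the assumed threshold."""
    return population_stability_index(baseline, current) > threshold
```

The same comparison could be run on evaluation scores bucketed into ranges, which would flag quality drift rather than input drift.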

Operationalize AI agent evaluation

Integrate LLM evaluation into your release process: run before deploy, monitor in production, iterate with a consistent evaluation framework.

Define metrics

Choose layers and KPIs that map to your goals.

Run continuously

Run evaluations on every change and in production.

Act on signals

Drift alerts, A/B results, and regression gates.
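A regression gate can be as small as a comparison between the metrics of the current release and those of a candidate. The sketch below assumes every metric is higher-is-better and that a drop of more than `max_drop` should block the release; the metric names and the default tolerance are placeholders.

```python
def regression_gate(
    baseline: dict[str, float],
    candidate: dict[str, float],
    max_drop: float = 0.02,
) -> list[str]:
    """Return the metrics that regressed; an empty list means the gate passes."""
    failures = []
    for name, base_value in baseline.items():
        new_value = candidate.get(name)
        # A metric missing from the candidate run is treated as a failure.
        if new_value is None or base_value - new_value > max_drop:
            failures.append(name)
    return failures
```

A CI step could call this after the evaluation suite finishes and fail the build whenever the returned list is non-empty.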

AI agent & LLM evaluation FAQ

- What is AI agent evaluation?

- AI agent evaluation measures how well your AI agents or LLMs perform in production, not just in demos. It covers task correctness, tool reliability, reasoning quality, and business impact (completion rate, user satisfaction), supported by drift detection and A/B testing.

- How do you evaluate LLMs in production?

- Evaluate LLMs in production with a layered framework: (1) task correctness, (2) tool and API reliability, (3) reasoning and consistency, and (4) business and user impact. Use continuous evaluation, regression suites, drift detection, and LLM evaluation metrics tied to KPIs like completion rate and user satisfaction.

- Why is AI agent evaluation hard?

- AI agent evaluation is hard because agents are non-deterministic, use tools and memory, and perform long-horizon, multi-step reasoning. Prompt drift and dataset drift make traditional accuracy metrics insufficient. You need an AI evaluation framework built for production.

Learn more: evaluation in practice

From building datasets to running evaluations and turning metrics into business value—step-by-step guides from the AgentX blog.

Create evaluation datasets

Building enterprise-grade evaluation datasets: the foundation of reliable AI agents. Realistic test cases, expected results, capabilities, and follow-ups.
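As a sketch of what one record in such a dataset might look like, the example below models a test case with the four elements listed above. The `EvalCase` schema and field names are assumptions made for illustration and do not describe the actual AgentX dataset format.

```python
from dataclasses import dataclass, field


@dataclass
class EvalCase:
    """One evaluation test case (hypothetical schema)."""
    case_id: str
    user_input: str        # realistic user request
    expected_result: str   # what a correct answer should contain
    capabilities: list[str] = field(default_factory=list)  # skills exercised
    follow_ups: list[str] = field(default_factory=list)    # later user turns


def coverage_by_capability(cases: list[EvalCase]) -> dict[str, int]:
    """Count how many test cases exercise each capability."""
    counts: dict[str, int] = {}
    for case in cases:
        for capability in case.capabilities:
            counts[capability] = counts.get(capability, 0) + 1
    return counts
```

A coverage count like this is a quick check that no capability is represented by only one or two cases.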

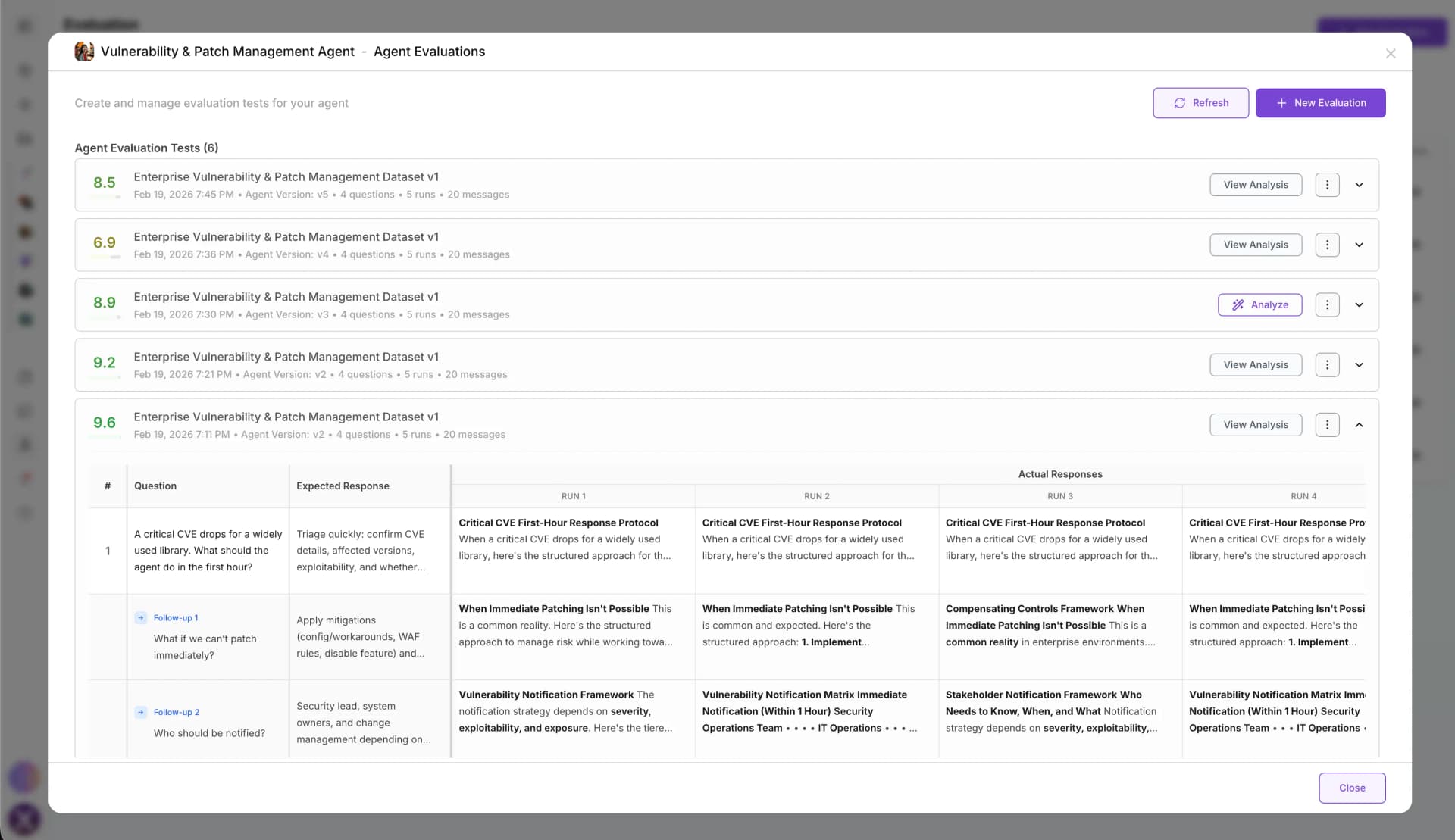

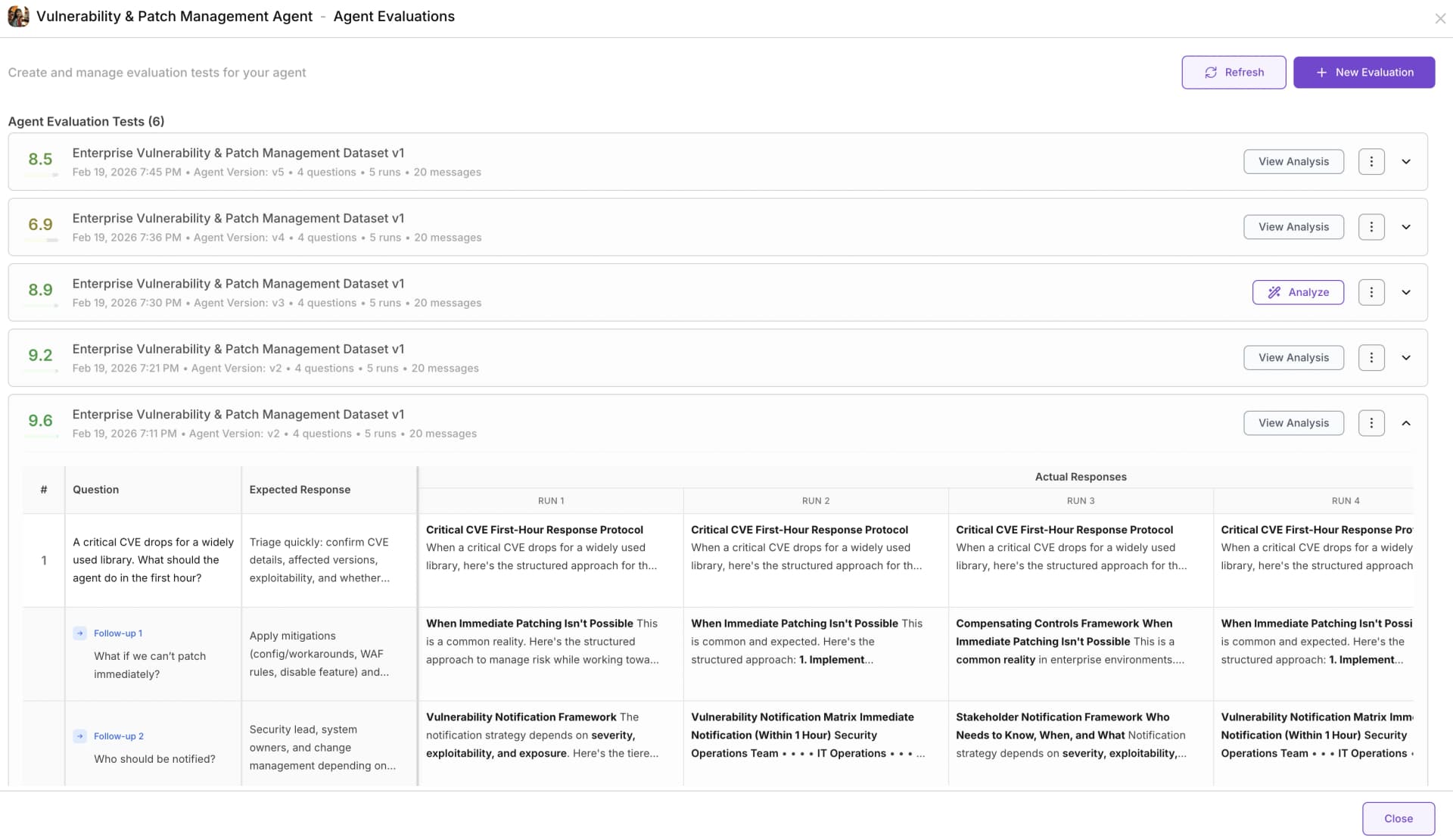

Part 2: Run AI agent evaluation with the dataset

From dataset to decision: running enterprise AI agent evaluations. Select your agent and dataset, run the evaluation, and get results with justifications and performance metrics.

Part 3: Turn metrics into business value with evaluation analysis

How to analyze, interpret, and act on AI agent evaluation results. Root-cause analysis, suggested instruction changes, re-runs to validate—turn evaluation into a release process.

Evaluate AI agents in production: stop flying blind

Use the AgentX AI agent evaluation framework to turn your LLMs and agents from demos into measurable, production-grade systems.